Internet Archive

The Internet Archive is a non-profit organization that is building a digital library, including archival copies of much of the public web. Since 2019 it has been the primary location where the IndieWeb community hosts videos of IndieWebCamp and Summit sessions.

The Internet Archive's Wayback Machine allows you to look up past versions of a URL and submit a URL to be captured as it exists currently.

How to

Upload an Archive

The Internet Archive wants to create a library of publicly available files. If you have the legal right to share your content, they will host it. Create an account and upload your files.

When you upload files, you create a page for each item. An item can contain multiple files. A concert, for example, would be one item with each song in its own file.

To "hotlink" to a file (to embed a podcast, for example), use http://www.archive.org/download/[IDENTIFIER]/[FILENAME] instead of https://archive.org/details/[IDENTIFIER]/[FILENAME]
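As a rough illustration of the hotlink pattern above, here is a minimal Python sketch that builds a download URL from an item identifier and a filename. The function name `download_url` and the sample identifier and filename are made up for illustration; only the URL shape comes from the text above.

```python
from urllib.parse import quote

# Base taken from the hotlink pattern described above.
DOWNLOAD_BASE = "http://www.archive.org/download"


def download_url(identifier, filename):
    """Return a direct-file URL for one file inside an item."""
    # Percent-encode spaces and other unsafe characters in each part.
    return f"{DOWNLOAD_BASE}/{quote(identifier)}/{quote(filename)}"


# Hypothetical item and file names, for illustration only.
print(download_url("example-item", "track 01.mp3"))
```

Encoding the filename matters mostly for files with spaces in their names, which would otherwise produce a broken link in an embed.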

To upload files to an existing page you need to edit the item. Directions for editing items

Trigger an Archive

Simple API

You can tell archive.org to crawl and archive a specific URL immediately. Assuming you have curl installed, run this on the command line:

curl -I https://web.archive.org/save/{url to archive} | grep ^location

and you'll get a response like:

location: /web/20160715203015/http://indieweb.org

(We have a wiki entry for the -I option for curl.)

The response includes the path to the archived page on web.archive.org. Append this path to https://web.archive.org to build the final URL for the archived page.
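The "append the path" step can be sketched in Python as follows. The helper name `archived_url` is hypothetical, and the branch for an already-absolute header value is a defensive assumption rather than documented behaviour.

```python
WAYBACK_ORIGIN = "https://web.archive.org"


def archived_url(location):
    """Turn a location header value into a full archived-page URL."""
    location = location.strip()
    # Assumption: if the header already holds an absolute URL, use it unchanged.
    if location.startswith(("http://", "https://")):
        return location
    # Otherwise it is a path such as /web/<timestamp>/<original URL>.
    return WAYBACK_ORIGIN + location


print(archived_url("/web/20160715203015/http://indieweb.org"))
```

Plain string concatenation is used here on purpose, since the path itself contains a second URL and should be passed through untouched.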

NOTE: Some time around 2020-08 this (the /save path) stopped working, with archive.org returning an error that says "You need to be logged in to use Save Page Now." Some time in 2022 (maybe 2021?) it started working again (verified 2023-005 with a manual load). However, it is somewhat rate-limited (experimental results vary), so you may want to prioritize using it for new links first, and have an async delayed process for archiving the rest.
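One way to read the "new links first, the rest later" advice is sketched below in Python. Everything here is an assumption for illustration: the function name `archive_in_order`, the `save` callable (whatever you use to hit the /save path), and the 10 second default delay, which is a guess and not a documented limit. It runs synchronously; in practice it would be called from a background job.

```python
import time


def archive_in_order(new_urls, old_urls, save, delay_seconds=10.0, sleep=time.sleep):
    """Call save(url) for new links first, then older ones, pausing between calls."""
    seen = set()
    results = []
    for url in list(new_urls) + list(old_urls):
        if url in seen:
            continue  # skip URLs that were already handled
        if seen:
            sleep(delay_seconds)  # pause before every request except the first
        seen.add(url)
        results.append((url, save(url)))
    return results
```

Passing `sleep` in as a parameter keeps the sketch easy to try out without actually waiting.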

Trigger Archive in PHP

PHP snippet to call the curl_exec() function with appropriate options/params to trigger an archive:

$options = array(
    CURLOPT_URL => 'https://web.archive.org/save/' . $url_to_save,
    CURLOPT_HEADER => true,
    CURLOPT_RETURNTRANSFER => true,
    CURLOPT_USERAGENT => "YOUR_CMS_NAME_HERE"
);
$ch = curl_init();
curl_setopt_array($ch, $options);
$response = curl_exec($ch);
$info = curl_getinfo($ch);
curl_close($ch);

You can then check $info['http_code'] for a numeric HTTP return code to see if there was an error (e.g. >= 400) and take action accordingly.

Trigger Archive in Python (with requests)

This method requires the 'requests' Python library.

pip install requests

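import requests

target = "https://example.com/"  # placeholder: the URL you want archived

# fire and forget archive.org call
try:
    verify = requests.get(
        "https://web.archive.org/save/" + target,
        allow_redirects=False,
        timeout=30,
    )
except requests.RequestException:
    # network errors and timeouts are deliberately ignored
    pass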
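The fire-and-forget call above ignores the response. If you would rather act on it, a small decision helper along these lines may be a starting point. The name `classify_save_response` and the three labels are made up here, and treating 429 and 5xx as "retry later" is an assumption based on general HTTP semantics, not on documented archive.org behaviour. With requests, the status code is available as `verify.status_code`.

```python
def classify_save_response(status_code):
    """Map an HTTP status code from the /save path to a suggested next step."""
    if status_code == 429 or 500 <= status_code < 600:
        # Assumption: rate limiting or temporary server trouble.
        return "retry-later"
    if status_code >= 400:
        # Any other client error.
        return "failed"
    # 2xx and 3xx responses.
    return "ok"


print(classify_save_response(302))
```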

Trigger Archive in Ruby

This is a Ruby method that triggers archiving by the Internet Archive for a given URL. It returns the URL that you can visit to see the archived page. It uses the rest-client Ruby gem to make a GET request.

require 'rest-client'

def web_archive(url)
  archive_request_response = RestClient.get("https://web.archive.org/save/#{url}")
  "https://web.archive.org" + archive_request_response.headers[:content_location]
end

Trigger Archive in Browser

You can manually prepend https://web.archive.org/save/ to a URL to save it on-demand. You can also set up a bookmarklet to archive the current page: [1]

javascript:var w=window.open('https://web.archive.org/save/'+location.href,'', 'scrollbars=1,status=0,resizable=1,location=0,toolbar=0');

Alternate API

There is a new API documented in a Google Doc

- As of 2023-01-06, the simpler API above appears to work fine. Leaving this more complex alternative here in case someone can verify that it’s more reliable, to justify the effort. Any takers? —

Barnaby Walters

Capture request

SPN2 runs on https://web.archive.org/save, which requires authentication using one of two alternative methods:

- S3 API keys (highly preferable): Get your account's keys at https://archive.org/account/s3.php and use the HTTP header "authorization: LOW $accesskey:$secret" in your requests.

- Cookies: Get logged-in-sig and logged-in-user from your browser when you log in to https://archive.org and add them to your SPN2 HTTP requests. Cookies are not desirable because they tend to expire after a few days, so you would need to log in to archive.org again to get new cookies.

There is a limit of 7 concurrent save sessions per user.
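A simple way to stay under that limit when saving many URLs from one script is a bounded worker pool. The Python sketch below assumes a `save_one` callable that you supply (it performs a single capture request); the names `save_all` and `MAX_CONCURRENT_SAVES` are made up for illustration.

```python
from concurrent.futures import ThreadPoolExecutor

MAX_CONCURRENT_SAVES = 7  # the per-user limit quoted above


def save_all(urls, save_one, max_workers=MAX_CONCURRENT_SAVES):
    """Run save_one(url) for every URL with at most max_workers in flight."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in the same order as the input URLs.
        return list(pool.map(save_one, urls))
```

This only bounds concurrency within a single process; if several jobs share one account, they would need to coordinate separately.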

To capture a web page via the API, you can use an HTTP POST or GET request as follows:

curl -X POST -H "Accept: application/json" -H "Authorization: LOW myaccesskey:mysecret" -d 'url=http://brewster.kahle.org/' https://web.archive.org/save

or

curl -X GET -H "Accept: application/json" --cookie "logged-in-sig=AAAAAAAAAA;logged-in-user=user1%40archive.org;" https://web.archive.org/save/http://brewster.kahle.org/
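For comparison, here is a sketch of the POST variant using only the Python standard library. It builds the request without sending it. The function name `build_capture_request` is hypothetical; the endpoint, headers, and `url` form field mirror the curl example above, and the key and secret are the same placeholders.

```python
from urllib.parse import urlencode
from urllib.request import Request

SPN2_ENDPOINT = "https://web.archive.org/save"


def build_capture_request(url, access_key, secret):
    """Build (but do not send) an authenticated SPN2 capture request."""
    # Form-encoded body; curl -d sends the same field without escaping it.
    body = urlencode({"url": url}).encode("ascii")
    headers = {
        "Accept": "application/json",
        "Authorization": f"LOW {access_key}:{secret}",
    }
    # Supplying data makes urllib issue a POST.
    return Request(SPN2_ENDPOINT, data=body, headers=headers)


req = build_capture_request("http://brewster.kahle.org/", "myaccesskey", "mysecret")
print(req.get_method(), req.full_url)
```

Passing the result to `urllib.request.urlopen` would actually send it; that step is left out so the sketch can be inspected without touching the network.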

The method described below is supposed to call through to the new API and enqueue the capture, but it may be slower: the backlog quoted at the time of writing was 671 minutes.

Retrieve the oldest available version of a URL

URL="http://example.com"

wget -O- -q "http://web.archive.org/web/timemap/link/${URL}" | grep "first memento" | sed -r "s/^<([^>]+)>;.*$/\1/"
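The grep and sed steps of that pipeline can be mirrored in Python with a small parser. This sketch only handles text you have already downloaded; the function name `first_memento` is hypothetical, and the sample timemap text is invented to match the line format the pipeline above expects.

```python
import re


def first_memento(timemap_text):
    """Return the URL of the entry marked "first memento", or None."""
    for line in timemap_text.splitlines():
        if "first memento" in line:
            # Same idea as the sed expression: capture what sits between < and >.
            match = re.match(r"<([^>]+)>;", line)
            if match:
                return match.group(1)
    return None


# Made-up sample in the link format the pipeline above expects.
sample = (
    '<http://example.com>; rel="original",\n'
    '<http://web.archive.org/web/20020120142510/http://example.com/>; '
    'rel="first memento"; datetime="Sun, 20 Jan 2002 14:25:10 GMT",\n'
)
print(first_memento(sample))
```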

Known

There is a Known plugin that auto-archives your posts and edits to your posts, as well as pages that you bookmark:

- https://www.marcus-povey.co.uk/2017/02/02/archive-org-wayback-machine-support-for-known/

- source: https://github.com/mapkyca/KnownWaybackMachine

Limitation(?)

- Appears to NOT archive the links in your posts, except for bookmark posts.

- SHOULD: Archive all pages you link to, e.g. all pages that you (even attempt to e.g. do discovery on to) send Webmentions to.

- GitHub issue: https://github.com/mapkyca/KnownWaybackMachine/issues/1 FIXED

WordPress

There is a useful little plugin, Post Archival in the Internet Archive, which will not only archive the user's post but also all the links within the post. All of the author's plugins were closed in 2019, and it is no longer maintained or installable through the WordPress admin interface, but the source is still available.
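The "archive the post and every link in it" behaviour described above can be approximated in a few lines of Python. This is a sketch under stated assumptions, not the plugin's actual logic: the names `LinkCollector` and `links_to_archive` are made up, and only absolute http(s) links in `<a href>` tags are considered.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect absolute http(s) links from <a href> tags, in order, without repeats."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href and href.startswith(("http://", "https://")) and href not in self.links:
            self.links.append(href)


def links_to_archive(post_url, post_html):
    """Return the post's own URL followed by every distinct link in its HTML."""
    collector = LinkCollector()
    collector.feed(post_html)
    return [post_url] + [u for u in collector.links if u != post_url]
```

Each URL in the returned list could then be passed to whichever trigger method from the sections above you prefer.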

Micro.blog

Micro.blog has a setting (off by default) to send new blog posts to the Internet Archive. It does not currently archive other links found in the blog post.

IndieWeb Examples

Jeremy Keith

Jeremy Keith has been pinging web.archive.org/save to archive pages that adactio.com posts link to since 2016-09-26

Aaron Parecki

Aaron Parecki has been pinging web.archive.org/save with every URL that a Webmention is sent to since 2016-09-26

Tantek

Tantek Çelik implemented (2016-11-13) pinging web.archive.org/save with every URL in a link/reply-to and has been doing it from tantek.com since 2016-11-15, using the Trigger Archive in PHP technique noted above.

Chris Aldrich

Chris Aldrich implemented (2017-01-07) pinging the archive with URLs of both his own posts as well as links within posts using Post Archival in the Internet Archive.

svgur

Kevin Marks implemented pinging the archive with URLs of the SVG display posts, and thus indirectly the SVGs themselves

mention-tech

Kevin Marks implemented (2017-01-24) pinging the archive with both the source and target URLs of the webmention so that they get preserved too.

Jonathan LaCour

Jonathan LaCour implemented on 4/04 (HAH!) in 2018 using the Known plugin.

Requests

- Telegraph support - https://github.com/aaronpk/Telegraph/issues/14 - should support auto-archiving for any sent Webmention

Downtime

Internet Archive downtime incidents are rare, but they do happen:

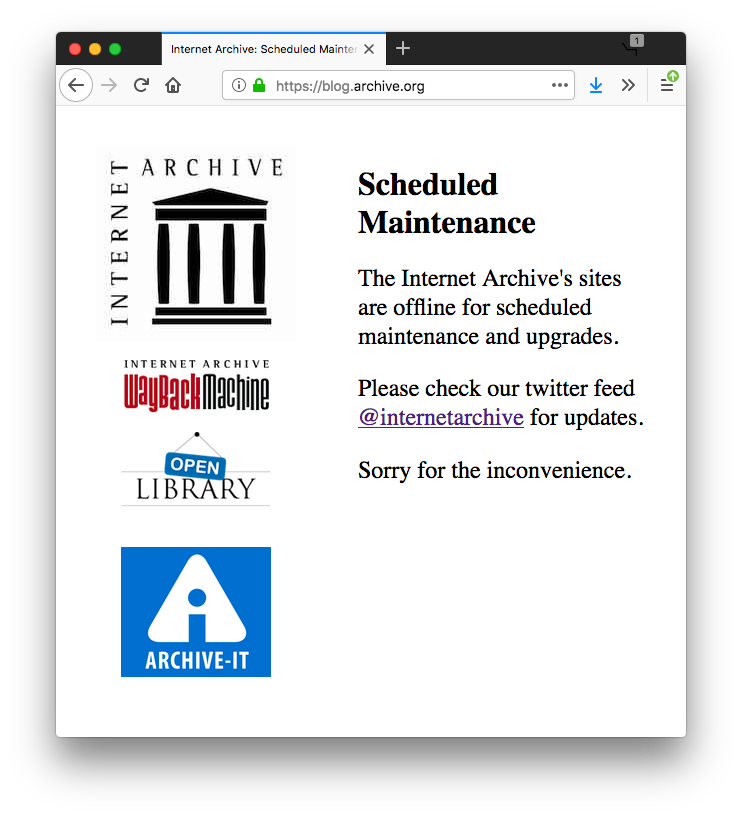

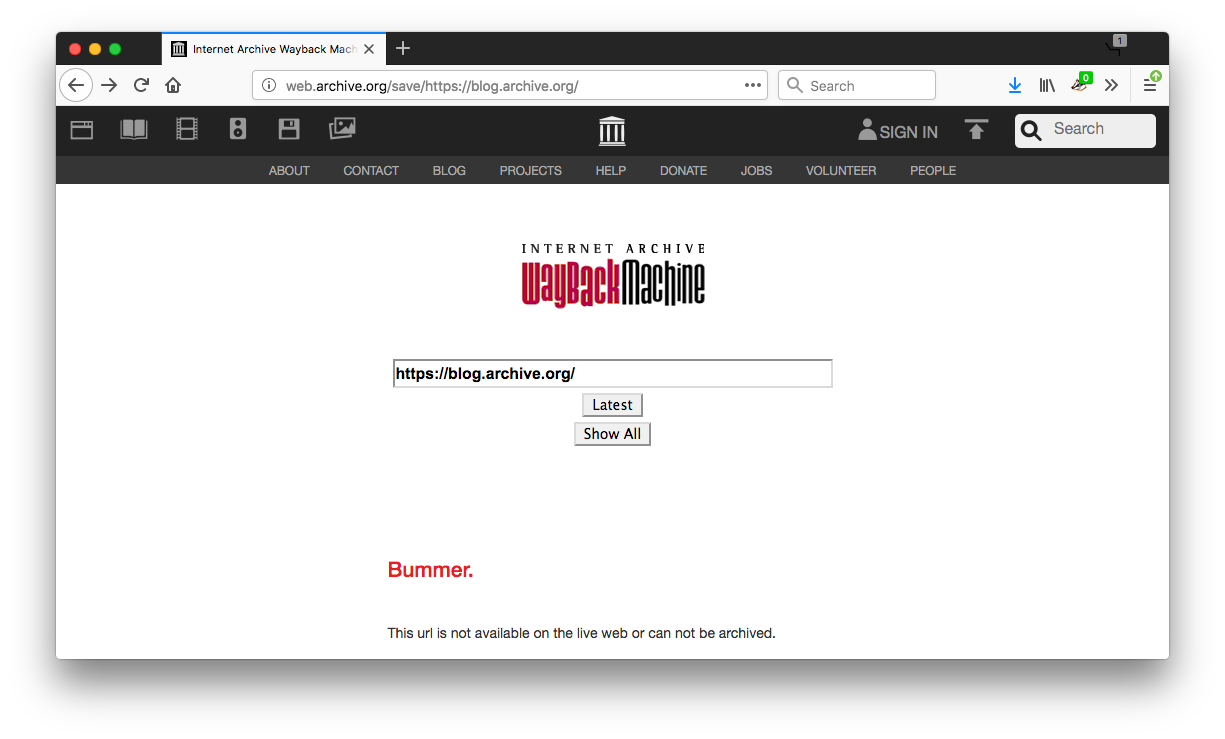

2019-04-23 outage

At least the blog:

And attempting to archive that page failed!

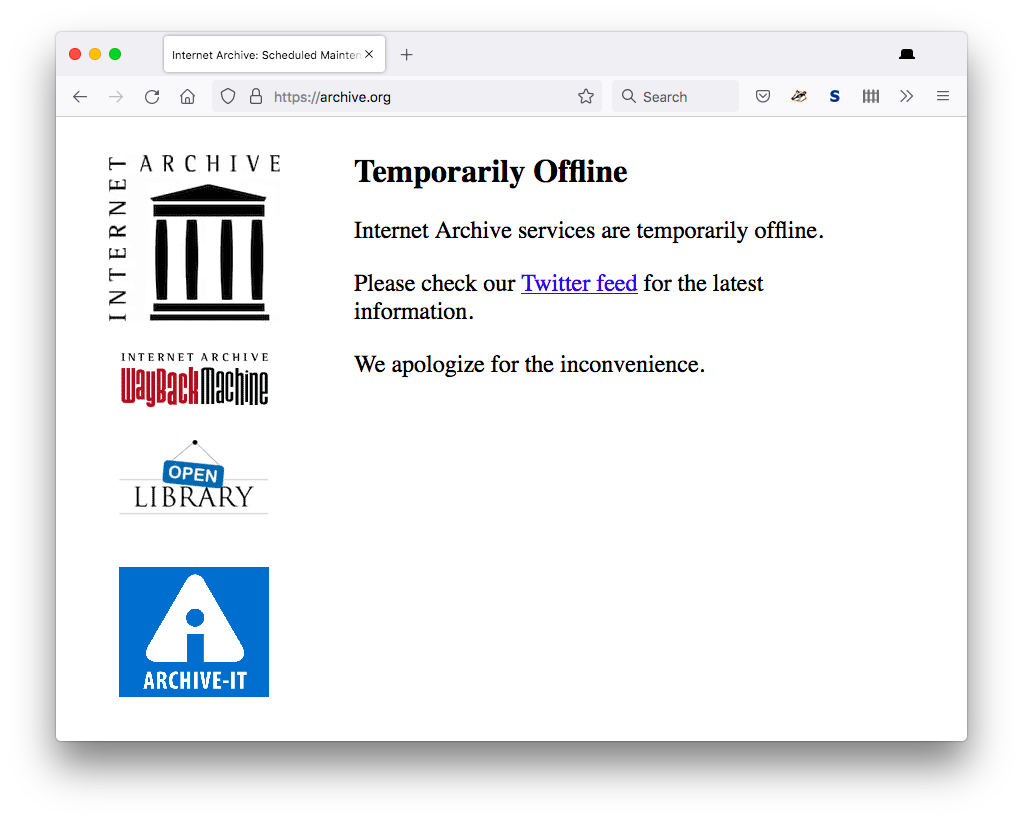

2022-01-14 outage

Top level Archive.org down as well as Wayback Machine:

See Also

- archival copy

- indiearchive

- Link Archiver on Twitter

- ...

- 2018-11-28 When the Internet Archive Forgets

- Outage: https://twitter.com/internetarchive/status/1143378990826004480

- "http://archive.org is down right now due to an unexpected outage. we are working to get it back up as soon as possible." @internetarchive June 25, 2019

- https://archive.org/details/@indieweb

- Post about the save page changes https://blog.archive.org/2019/10/23/the-wayback-machines-save-page-now-is-new-and-improved/

- Runs several open source software sites/services at subdomains, like https://blog.archive.org/ (WordPress), https://git.archive.org/ (GitLab), and https://mastodon.archive.org/ (Mastodon)

- 2023-05-29 brief outage: https://blog.archive.org/2023/05/29/let-us-serve-you-but-dont-bring-us-down/

- downtime: 2023-12-05 https://archive.org/ said: "Temporarily Offline. Internet Archive services are temporarily offline. Please check our Twitter feed for the latest information. We apologize for the inconvenience."

- ^ https://twitter.com/textfiles/status/1732192819098292677 "Power has gone out at the @internetarchive"

- Brainstorming: use-case for auto-archiving your own posts, via gRegor Morrill in dev chat: automatic link to the IA archive on older posts like "see what this post looked like when published."
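A possible starting point for that brainstorm, sketched in Python: build a Wayback Machine link from a post's URL and publish time, using the same /web/[timestamp]/[URL] shape seen in the location header example earlier on this page. The function name `wayback_url_at` is made up, and it assumes the publish time is already in UTC and that the Wayback Machine will resolve the link to a capture near that time.

```python
from datetime import datetime


def wayback_url_at(url, published):
    """Build a Wayback Machine URL for `url` at the time it was published."""
    # 14-digit timestamp, e.g. 20160715203015 (assumed to be UTC).
    timestamp = published.strftime("%Y%m%d%H%M%S")
    return f"https://web.archive.org/web/{timestamp}/{url}"


print(wayback_url_at("http://indieweb.org", datetime(2016, 7, 15, 20, 30, 15)))
```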